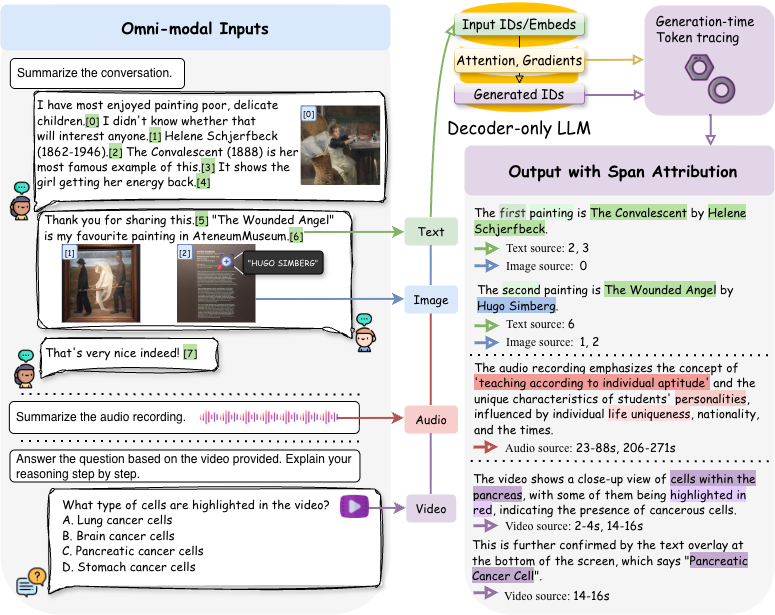

Modern multimodal large language models generate fluent responses from interleaved text, image, audio, and video inputs, but it remains difficult to determine which specific inputs support each generated statement. Existing attribution methods are largely built for classification settings, fixed prediction targets, or single-modality architectures, and do not naturally extend to autoregressive, decoder-only multimodal generation.

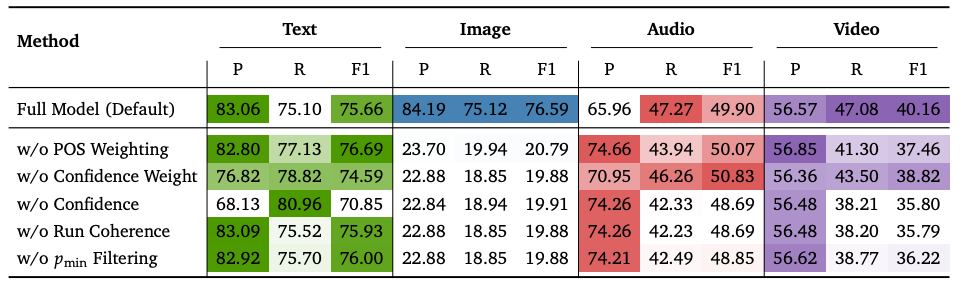

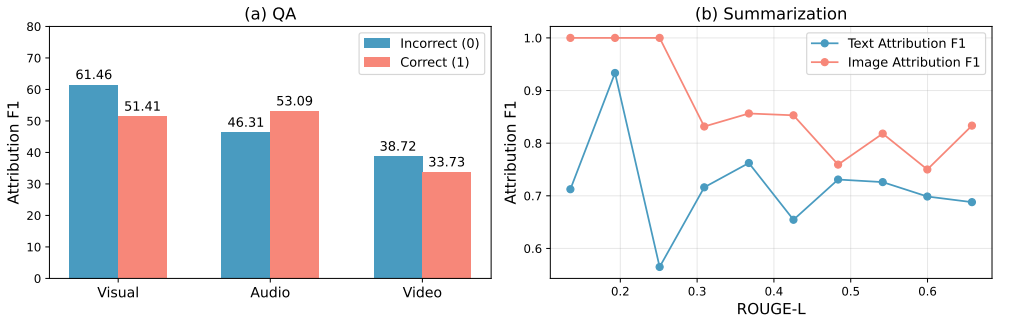

OmniTrace addresses this by formalizing attribution as a generation-time tracing problem over the causal decoding process. Instead of producing explanations only after the final response is complete, OmniTrace follows generation token by token, converts arbitrary token-level signals, such as attention weights or gradients, into source assignments, and aggregates those assignments into stable, semantically coherent span-level explanations.
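The token-to-span pipeline described above can be illustrated with a minimal sketch. This is not OmniTrace's implementation. It assumes that each generated token already has one score per source unit, for example attention mass or gradient magnitude. Each token is assigned to its top-scoring source, and a majority vote is then taken within each output span, such as a sentence. The function names (`assign_sources`, `aggregate_spans`), the argmax assignment, and the majority-vote aggregation are hypothetical choices made only for illustration.

```python
from collections import Counter
from typing import List, Optional, Sequence, Tuple


def assign_sources(token_scores: Sequence[Sequence[float]]) -> List[int]:
    """For each generated token, return the index of its highest-scoring source unit.

    token_scores[t][s] is a (hypothetical) attribution score of source unit s
    for generated token t, e.g. attention mass or gradient magnitude.
    """
    return [max(range(len(row)), key=row.__getitem__) for row in token_scores]


def aggregate_spans(
    assignments: Sequence[int], spans: Sequence[Tuple[int, int]]
) -> List[Optional[int]]:
    """Return the majority source for each output span.

    spans holds half-open [start, end) ranges over generated tokens,
    e.g. sentence boundaries. Ties go to the source seen first in the span;
    empty spans map to None.
    """
    result: List[Optional[int]] = []
    for start, end in spans:
        counts = Counter(assignments[start:end])
        result.append(counts.most_common(1)[0][0] if counts else None)
    return result


# Toy example: 5 generated tokens scored against 3 source units.
scores = [
    [0.7, 0.2, 0.1],
    [0.6, 0.3, 0.1],
    [0.1, 0.8, 0.1],
    [0.2, 0.1, 0.7],
    [0.1, 0.2, 0.7],
]
assignments = assign_sources(scores)  # [0, 0, 1, 2, 2]
print(aggregate_spans(assignments, [(0, 3), (3, 5)]))  # [0, 2]
```

Aggregating at the span level smooths out isolated tokens whose top source differs from their neighbors, such as token 2 in the toy example. This is one simple way to obtain the kind of stable, span-level explanation the framework targets.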

The framework is designed to be lightweight, plug-and-play, and model-agnostic. It does not require retraining or supervision, and it supports heterogeneous source units across modalities, including text spans, image regions, audio intervals, and video intervals.
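The sketch below shows one way heterogeneous source units could be represented. The representation is our own assumption and is not taken from OmniTrace. It assumes, as in many decoder-only multimodal models, that every input is ultimately a run of positions in one flattened token sequence. Under that assumption, a text span, image region, audio interval, or video interval can all be described the same way: a modality tag, a modality-specific locator, and the token range the unit occupies. A per-position signal can then be pooled into one score per unit. `SourceUnit`, `pool_to_units`, and summation as the pooling rule are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class SourceUnit:
    """A hypothetical source unit: one attributable piece of the input."""
    modality: str               # "text", "image", "audio", or "video"
    locator: Tuple[float, ...]  # (char_start, char_end), (x0, y0, x1, y1), or (t_start_s, t_end_s)
    token_start: int            # first position of the unit in the flattened input sequence
    token_end: int              # one past its last position (half-open range)


def pool_to_units(signal: Sequence[float], units: Sequence[SourceUnit]) -> List[float]:
    """Sum a per-input-position signal (e.g. one generated token's attention row) over each unit."""
    return [sum(signal[u.token_start:u.token_end]) for u in units]


units = [
    SourceUnit("text", (0, 42), 0, 3),            # a character span of the prompt
    SourceUnit("image", (0, 0, 112, 112), 3, 7),  # top-left quadrant of a 224x224 image
    SourceUnit("audio", (0.0, 2.5), 7, 9),        # first 2.5 seconds of the clip
]
signal = [0.125, 0.125, 0.25, 0.125, 0.125, 0.0, 0.125, 0.125, 0.0]
print(pool_to_units(signal, units))  # [0.5, 0.375, 0.125]
```

Because every unit is reduced to a token range, the same pooling code serves all modalities. Only the locator, which maps a result back to something a user can inspect, differs per modality.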